Credit markets update:

Entering the Black Hole: Understanding AI’s 30 GW Threshold

AI has entered a phase where ambition outstrips intuition.

The sector’s leading figures speak openly about energy requirements on a national scale, including Sam Altman’s proposal for a 30 GW compute cluster. Such numbers are often repeated but rarely understood. Are they signs of a speculative bubble, a rational response to future demand, or the early stages of an economic transition we are poorly equipped to analyse?

This report explores the meaning of 30 GW in concrete terms, situates it within historical patterns of technological change, and outlines why non-linear effects complicate attempts to assess the risks in the current AI capex supercycle. We argue that the 30 GW figure is best understood not as a sign of excess, but as the approximate scale needed simply to reach the next capability threshold.

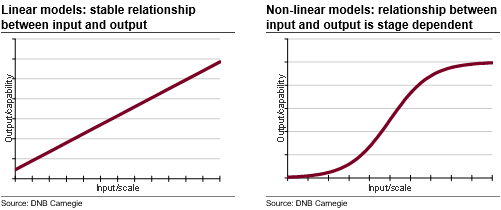

Linear vs non-linear systems

Most systems and technologies scale linearly. Add more machines or workers and you get a predictable increase in output. Food intake and body weight tend to move together for a given level of physical effort. Adding RAM to a PC, up to a point, gives faster boot-up and shutdown times as well as smoother program launches and task execution. These relationships are intuitive because they are roughly proportional: a given input leads to a predictable output.

But some systems do not behave this way. Stephen Hawking spent much of his career analysing objects whose behaviour changed abruptly once they crossed certain thresholds. A black hole, for instance, is not simply a heavier star; it is a qualitatively different object, formed when gravity compounds on itself. Once that threshold is crossed, the behaviour of the system changes entirely: near a black hole, gravity becomes so extreme that spacetime itself is sharply distorted. Time slows, light curves and familiar relationships break down. It is a regime where scale produces qualitatively different behaviour, which is the essence of non-linearity. Once a system crosses certain thresholds, you do not get “more of the same”; you get “something new”.

These non-linear transitions are rare in economics, yet they sit at the heart of today’s AI debate. Non-linear effects make technology unpredictable. They explain why certain thresholds feel insignificant until they suddenly redefine what is possible. Sam Altman at OpenAI speaks as if he has a clear vision for what AI could become and what scale of resources is needed to reach that point. His references to a 30 GW build-out have raised eyebrows and led some to question whether the entire ecosystem is drifting into a speculative bubble. But the relevant question is not whether 30 GW of compute capacity is “a lot” in a conventional sense. The question is whether such scale pushes the system across a capability boundary that then creates entirely new forms of demand. If that is the case, 30 GW may not be excessive; it may be insufficient.

Understanding the scale

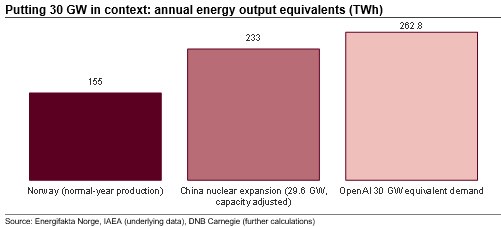

To understand the scale of 30 GW, it helps to compare it with a system we know well. Norway’s electricity system is large relative to its population, with almost 40 GW of installed capacity and annual production in a normal year of roughly 155 TWh. It is a system built over more than a century, shaped by unique hydrological conditions. When we compare Altman’s 30 GW ambition with a system of this size, the scale becomes easier to conceptualise.

To put this in perspective: if a 30 GW AI cluster ran continuously, it would require around 260 TWh of electricity per year, almost 70 percent more than Norway’s normal annual production. In energy terms, Altman’s plans would therefore massively exceed the output of the Norwegian power system.
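The arithmetic behind this comparison is easy to check. The Python sketch below converts a constant load in gigawatts into annual energy in terawatt-hours. The `utilisation` parameter is our own illustrative assumption and not part of the figures above: the text assumes continuous operation, and a real cluster is unlikely to run at full load all year.

```python
HOURS_PER_YEAR = 8760  # 365 days x 24 hours, ignoring leap years
NORWAY_NORMAL_YEAR_TWH = 155  # normal-year production cited in the text


def annual_energy_twh(capacity_gw: float, utilisation: float = 1.0) -> float:
    """Annual electricity use, in TWh, of a load of `capacity_gw` at a given utilisation."""
    # GW x hours gives GWh; divide by 1,000 to convert GWh to TWh
    return capacity_gw * HOURS_PER_YEAR * utilisation / 1_000


# Utilisation levels below 1.0 are illustrative assumptions, not figures from the text
for utilisation in (1.0, 0.8, 0.6):
    twh = annual_energy_twh(30, utilisation)
    ratio = twh / NORWAY_NORMAL_YEAR_TWH
    print(f"{utilisation:.0%} utilisation: {twh:.1f} TWh/year, {ratio:.2f}x Norway's normal year")
```

At full utilisation this reproduces the figures in the text: 262.8 TWh, roughly 70 percent more than Norway's normal-year production. Even at an assumed 60 percent utilisation, the cluster would still use slightly more electricity than Norway produces in a normal year.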

This comparison is useful because it translates an abstract compute number into physical infrastructure: reservoirs, turbines, transmission lines and substations. It illustrates that a 30 GW cluster is not simply a ‘bigger data centre’ but an energy system on the scale of a small, highly developed industrial nation.

Another example: China is currently the most active country in building out nuclear power. According to the IAEA, it has 29 reactors under construction, which together will add 29.6 GW of capacity, or roughly 1 GW per reactor. On that basis, a 30 GW AI cluster would require the equivalent of around 30 modern nuclear reactors operating at full output, roughly China’s entire current nuclear construction pipeline.
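The reactor comparison can be reproduced the same way. The sketch below takes the IAEA figures cited above, derives the average reactor size and rounds up to whole reactors. The `capacity_factor` parameter is a hypothetical addition: at 1.0 it matches the “full output” assumption in the text, while a lower value illustrates how allowing for outages and refuelling would raise the count.

```python
import math

# Figures cited in the text (IAEA data on China's nuclear construction pipeline)
REACTORS_UNDER_CONSTRUCTION = 29
CAPACITY_UNDER_CONSTRUCTION_GW = 29.6


def reactors_needed(load_gw: float, capacity_factor: float = 1.0) -> int:
    """Whole reactors of average size needed to supply a constant load.

    `capacity_factor` is a hypothetical parameter: 1.0 reproduces the
    'full output' assumption; lower values allow for outages and refuelling.
    """
    avg_reactor_gw = CAPACITY_UNDER_CONSTRUCTION_GW / REACTORS_UNDER_CONSTRUCTION
    # Round up, since a fractional reactor cannot be built
    return math.ceil(load_gw / (avg_reactor_gw * capacity_factor))


print(reactors_needed(30))       # full output, as assumed in the text
print(reactors_needed(30, 0.9))  # illustrative 90% capacity factor (assumption)
```

At full output the answer is 30 reactors; with an illustrative 90 percent capacity factor it rises to 33. The 90 percent value is chosen purely for illustration, not as a forecast.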

Historical parallels

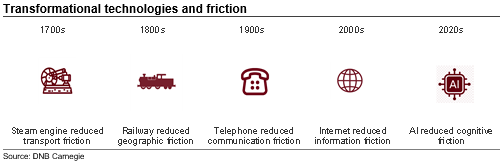

Throughout history, the technologies that have had the deepest economic impact have not been those that replaced individual tasks, but those that reduced friction in the movement of information, goods or people. The steam engine, railways, the telegraph, the telephone, the internal combustion engine and the internet all share the same underlying function: they expanded the scale at which societies could coordinate, allocate resources and make decisions. Each represented a step change in the efficiency of communication or transport. They did not simply make existing processes faster; they altered what was possible to organise.

Economists often focus on productivity growth or job displacement when discussing such transitions. But the defining feature of these technologies is that they compressed distance, accelerated information flow and lowered the cost of interaction. This enabled entirely new forms of production, distribution and organisation. Railways produced national markets. The telephone produced real-time coordination across firms. The internet produced the modern services economy. At each stage, the economic value did not come from replacing workers one-to-one; it came from creating new sectors that could not exist before coordination costs fell.

Labour displacement vs new sectors

This historical pattern also clarifies the labour question. Major technological leaps have rarely produced large net job losses. Instead, they have displaced specific occupations while expanding the overall economic frontier. For example:

- Railways displaced canal operators but created national logistics systems, time-zone coordination and mass retailing.

- Electricity displaced steam-engine operators but created manufacturing industries with entirely new job categories.

- Computers displaced clerical roles but created the software, consulting, digital advertising and e-commerce sectors.

- The internet displaced some retail jobs but created global digital services markets that employ millions.

In none of these cases did long-run displacement emerge as the dominant outcome. The dominant effect was the creation of new coordination-intensive sectors, because the technology reduced friction to such an extent that previously impossible activities became viable.

This is why trying to estimate the future size of the AI sector by counting displaced jobs is likely to miss the point. The question is not how many workers AI replaces. The question is what new services and industries become viable when reasoning, prediction and communication can occur at a low marginal cost.

A shift from communication to cognition

If previous technological leaps reduced friction in communication, AI reduces friction in cognition. It does not merely move information more efficiently; it interprets, transforms and reasons about that information. One way to think about it is that it narrows the gap between what someone wants, knows or decides and what they can actually execute.

The transition is subtle but important. With railways or the internet, humans still performed the analytical work: processing information, making decisions, allocating resources and coordinating action. AI alters this by reducing the cost and time required for prediction, analysis and complex problem-solving. In short, the cost of understanding falls, decision-making becomes easier, and execution becomes cheaper.

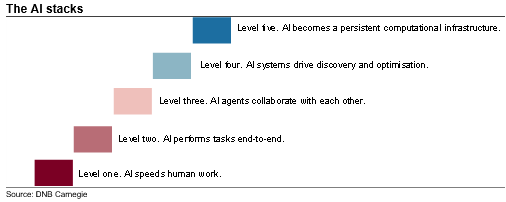

Stacking and thresholds

This dynamic leads naturally to what might be called stacking. Small improvements in AI capability unlock new use cases. These new use cases generate demand for more compute. Additional compute then improves capability again, unlocking yet further uses. The system compounds on itself. Unlike traditional technologies, where input and output scale proportionately, cognitive technologies evolve by crossing thresholds. A modest gain in model ability can enable applications that never existed at lower capability levels, creating entirely new demand curves.
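The stacking loop can be made concrete with a toy model. In the sketch below, capability is assumed to grow only with the logarithm of compute, and each hypothetical use case adds a fixed block of compute demand once capability crosses its threshold. Capacity is then built to meet demand, which raises capability again. All names and numbers (`BASE_DEMAND`, `USE_CASES`, the log2 capability curve) are invented for illustration and are not estimates.

```python
import math

# Toy model of "stacking". Every number is a hypothetical illustration, not an estimate.
BASE_DEMAND = 1.0
# (capability threshold, extra compute demand unlocked once the threshold is crossed)
USE_CASES = [(0.5, 2.0), (1.5, 6.0), (3.0, 20.0)]


def capability(compute: float) -> float:
    # Assumed diminishing returns: each doubling of compute adds roughly one unit of capability
    return math.log2(1 + compute)


def demand(cap: float) -> float:
    # Base load plus every use case whose threshold has been crossed
    return BASE_DEMAND + sum(extra for threshold, extra in USE_CASES if cap >= threshold)


def simulate(compute: float = 1.0, max_rounds: int = 10) -> list[float]:
    """Build capacity to meet demand each round until the loop stops compounding."""
    path = [compute]
    for _ in range(max_rounds):
        next_compute = demand(capability(compute))
        if next_compute == compute:  # fixed point: no new threshold was crossed
            break
        compute = next_compute
        path.append(compute)
    return path


print(simulate())
```

Starting from one unit of compute, the path is `[1.0, 3.0, 9.0, 29.0]`: capacity roughly triples each round even though capability rises only logarithmically, because each round crosses a new threshold. Once no further threshold is within reach, the loop stops compounding, which corresponds to the plateau scenario discussed later in this report.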

What might this process look like in practice? One way of describing it is through a series of layered equilibrium shifts:

- First equilibrium. AI speeds human work.

- Second equilibrium. AI performs tasks end-to-end.

- Third equilibrium. AI agents collaborate with each other.

- Fourth equilibrium. AI systems drive discovery and optimisation.

- Fifth equilibrium. AI becomes a persistent computational infrastructure.

At each stage, existing capacity becomes insufficient, and a new layer must be built. The constraint shifts from software design to physical infrastructure: energy, land, transmission, cooling, materials and permitting. And because each new equilibrium enables more complex classes of tasks, the jump in required compute is likely to be disproportionate rather than incremental. If AI follows this trajectory, then 30 GW is not a destination but simply the level required to reach the next equilibrium.

Why evaluating the risks is so difficult

The difficulty in evaluating the risks associated with today’s AI capex boom stems from the fact that the system is shaped by thresholds rather than proportionate adjustments. In a linear system, investors can reasonably assess future demand by extrapolating from current usage. In a threshold-driven system, current demand tells us very little about what comes next. The relevant question is not how much compute the economy uses today, but what becomes possible once the next layer of capability is reached.
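A minimal sketch of why extrapolation struggles in a threshold-driven system. The hypothetical demand path below grows slowly until year 5, when a capability threshold adds a step of new demand. An ordinary least-squares trend fitted to the pre-threshold years is then extrapolated forward. The demand function and all of its numbers are invented for illustration.

```python
def threshold_demand(year: int) -> float:
    """Hypothetical demand: slow linear growth plus a step once a threshold is crossed."""
    base = 10.0 + 1.0 * year           # existing uses grow steadily
    step = 40.0 if year >= 5 else 0.0  # new uses unlocked from year 5 (assumed)
    return base + step


def linear_forecast(history: list[tuple[int, float]], year: int) -> float:
    """Fit an ordinary least-squares line to past observations and extrapolate it."""
    n = len(history)
    mean_x = sum(x for x, _ in history) / n
    mean_y = sum(y for _, y in history) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in history)
    sxx = sum((x - mean_x) ** 2 for x, _ in history)
    slope = sxy / sxx
    return mean_y + slope * (year - mean_x)


# Observe only the pre-threshold years, as an investor would today
observed = [(year, threshold_demand(year)) for year in range(5)]

for year in (5, 6, 7):
    forecast = linear_forecast(observed, year)
    actual = threshold_demand(year)
    print(f"year {year}: trend forecast {forecast:.1f}, threshold-driven demand {actual:.1f}")
```

Fitted to years 0 to 4, the trend forecasts 15 units for year 5 against an actual 55. Nothing in the pre-threshold history reveals the step. The same logic cuts the other way: if the threshold never arrives, capacity built in anticipation of the step would sit idle, which is the overbuilding risk.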

This makes the AI build-out unusual from a macro perspective. For most technologies, capacity is added to meet observed demand. In AI, capacity is partly built in anticipation of capabilities that do not yet exist. This reverses the usual order of analysis: instead of “demand drives capacity”, we move into a world where “capacity enables demand”. That makes the sector prone to both overbuilding and sudden surges in utilisation once a new equilibrium is reached.

A second challenge is that the constraints are not primarily financial or digital, but physical. Building tens of gigawatts of compute requires reliable energy, reinforced transmission networks, access to land, cooling systems, specialised materials and, crucially, permitting and political tolerance. These bottlenecks operate on timescales very different from software cycles, making the long-term outlook highly path-dependent.

A third source of uncertainty is social acceptance. Even if the engineering and capital are available, large-scale infrastructure must be approved, sited and integrated into local communities. Data centres consume significant amounts of electricity and water, alter land use, and often face pushback from residents and environmental groups. Whether society will accept the physical footprint of an AI-led economy remains an open question.

There is also a capability risk that sits alongside the physical constraints. The logic of threshold effects assumes that greater scale unlocks qualitatively new behaviour. But AI capability depends chiefly on two inputs: compute and data. While compute can be expanded through infrastructure investment, the supply of high-quality training data is harder to scale. It is conceivable that crossing into a new capability threshold could allow models to use existing data more efficiently, but this cannot be assumed. Over time, more data will naturally be generated as digital activity expands, but this is a gradual process and does not remove the near-term risk of capability stalling. If future model gains are instead constrained by data limits, algorithmic efficiency or diminishing returns to size, capability may plateau even as capacity expands. In that scenario, a 30 GW cluster would not function as a bridge to the next equilibrium, but as a large linear overbuild relative to realised demand.

Final thoughts

Taken together, these factors make it difficult to apply traditional investment frameworks to AI infrastructure. The risks go beyond overvaluation or misallocated capital to include the possibility that the physical systems required for the next equilibrium cannot be delivered fast enough. Non-linear systems do not scale smoothly. Past a certain point, inputs and outputs no longer behave in familiar ways, much as gravity near a black hole bends space and time in ways that differ fundamentally from the rest of the universe. The central challenge for investors is that the system may change its character before the data needed to evaluate it becomes available.

Note: Buying and selling shares involves high risk because the value of securities will fluctuate with supply and demand. Historical returns in the stock market are never a guarantee of future returns. Future returns will depend on, among other things, market developments, the company’s performance, your own skill, the costs of buying and selling, and tax considerations.

The content of this article is intended neither as investment advice nor as a recommendation. If you have any questions about investments, you should contact a financial adviser who knows you and your situation.